Article 3 : Video Latency (Video Delay)

The 'video latency' of a video monitor can be defined as the time from the moment a video signal is input to the monitor to the moment that signal is turned into visible light output on the display screen. This video delay causes a mismatch between video and audio output (widely known as a 'Lip-Sync' problem), so minimizing the video delay is always important for broadcasters.
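To make the lip-sync effect concrete, a delay in milliseconds can be expressed as a number of frame periods. The following is a minimal sketch; the function names and the example figures are illustrative assumptions, not measurements of any monitor.

```python
def latency_in_frames(latency_ms: float, frame_rate_hz: float) -> float:
    """Express a latency in milliseconds as a number of frame periods."""
    if frame_rate_hz <= 0:
        raise ValueError("frame_rate_hz must be positive")
    frame_period_ms = 1000.0 / frame_rate_hz
    return latency_ms / frame_period_ms


def av_offset_ms(video_latency_ms: float, audio_latency_ms: float) -> float:
    """Audio/video mismatch; a positive result means the picture lags the sound."""
    return video_latency_ms - audio_latency_ms


if __name__ == "__main__":
    # Hypothetical figures chosen only for illustration.
    print(latency_in_frames(40.0, 50.0))  # a 40 ms delay at 50 frames per second
    print(av_offset_ms(40.0, 5.0))        # video path 40 ms, audio path 5 ms
```

Expressing the delay in frames makes it easier to compare monitors across formats, since the same delay in milliseconds spans more frames at higher frame rates.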

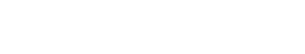

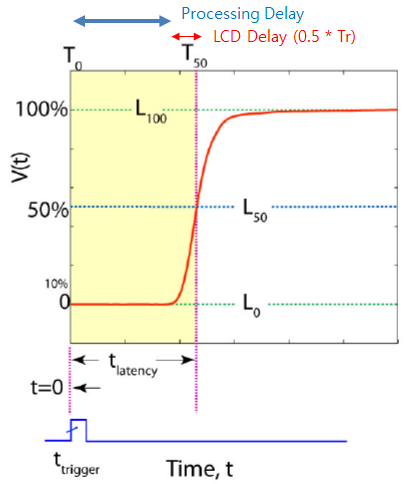

The video delay arises in two places: the video processing board inside the monitor and the display panel (e.g. LCD). The light output from displays such as CRT or OLED is fast enough to be ignored, but an LCD has a relatively large delay, which causes motion blur. The picture below explains the difference between these two delays. The processing inside the video board delays the delivery of the video signal to the display (LCD), whereas the delay in the LCD comes from the slow response of the liquid crystal and results in an optical output delay.

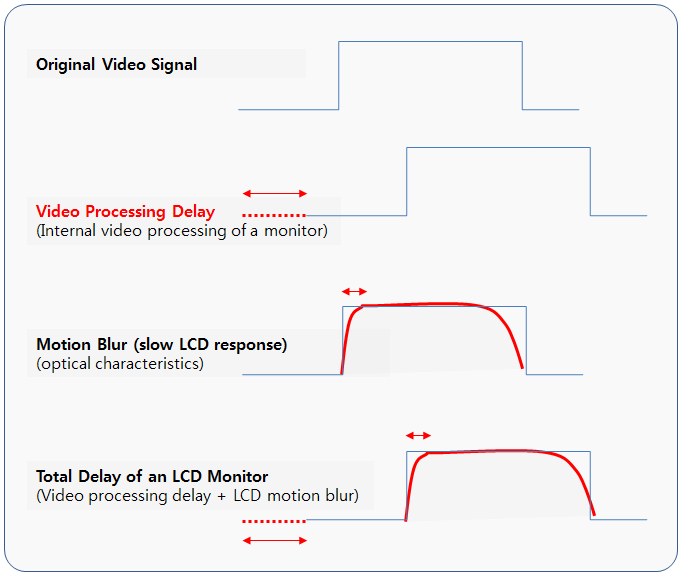

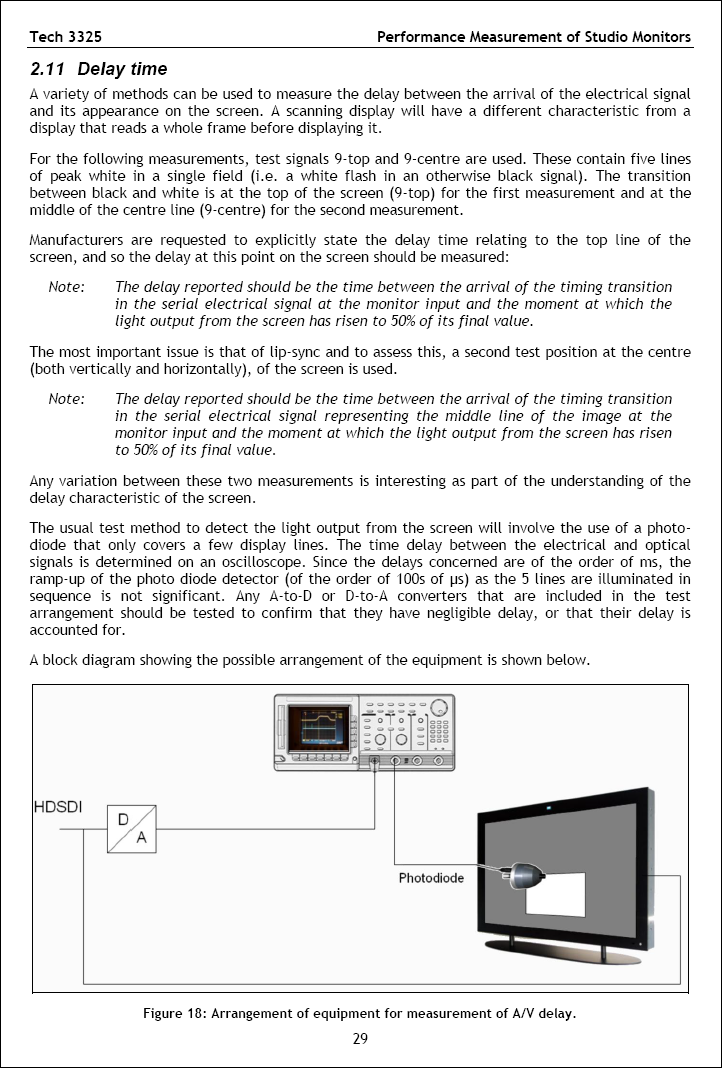

Therefore, to measure video latency (or delay) correctly, you need a system that records the exact time at which the video signal is input to the monitor and the exact time at which the light output appears on the monitor. The picture below illustrates a video delay measurement system that includes an optical measurement device. (Image courtesy of IDMS)

The total video delay will be shown on an oscilloscope as below. Please note that only 50% of the Rise Time (Tr) of the display is included, not the total response time of an LCD, which is usually given as (Tr+Tf) or as the Gray-to-Gray average (GTG). (Image courtesy of IDMS)

EBU Tech.3325 is another good reference for the definition and measurement method of video delay for broadcast monitors. It also suggests including only 50% of the Rise Time (Tr) of the display being measured. The response time of a display depends entirely on its optical characteristics; as mentioned above, the response time of CRT and OLED can be ignored because they are fast enough. LCDs, however, have response times that vary with their technology, and these cause motion blur.
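The "50% of rise time" convention can be sketched in code. The example below is an illustration under stated assumptions, not the IDMS or EBU procedure itself: it assumes the oscilloscope data is available as sampled lists, and it takes the moment the light output crosses the midpoint between its dark and bright levels as an approximation of "processing delay plus half the rise time". All names and sample values are hypothetical.

```python
from typing import Sequence


def total_delay_ms(processing_ms: float, rise_time_ms: float) -> float:
    """Total video delay counting only 50% of the panel rise time (Tr)."""
    return processing_ms + 0.5 * rise_time_ms


def crossing_time(times: Sequence[float], values: Sequence[float], level: float) -> float:
    """First time the trace rises through `level`, using linear interpolation."""
    for i in range(1, len(values)):
        v0, v1 = values[i - 1], values[i]
        if v0 < level <= v1:
            frac = (level - v0) / (v1 - v0)
            return times[i - 1] + frac * (times[i] - times[i - 1])
    raise ValueError("trace never rises through the requested level")


def delay_from_trace(times: Sequence[float], light: Sequence[float], t_input: float) -> float:
    """Delay from the input event to the midpoint of the optical transition."""
    low, high = min(light), max(light)
    midpoint = low + 0.5 * (high - low)
    return crossing_time(times, light, midpoint) - t_input


if __name__ == "__main__":
    # Hypothetical photodiode samples (time in ms, light in arbitrary units).
    t = [0.0, 10.0, 20.0, 30.0, 40.0]
    y = [0.0, 0.0, 0.2, 0.8, 1.0]
    print(delay_from_trace(t, y, t_input=5.0))
    print(total_delay_ms(processing_ms=16.0, rise_time_ms=8.0))
```

Timing to the midpoint rather than to the fully settled level keeps the slow tail of the liquid crystal response out of the latency figure, which is the point of counting only half of Tr.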

Even though the response time of LCDs is far greater than that of CRTs and OLEDs, this optical delay from the display panel is still relatively small compared to the internal processing delay of the video board. As standard video resolution moves from 2K to 4K, and the frame rate increases from 50i/60i to 50p/60p, video boards require more time to process the input video signal.
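A rough way to see how much heavier the newer formats are is to compare active pixel rates. This sketch makes several simplifying assumptions: "2K" is taken as 1920×1080, an interlaced 60i signal is counted as 30 full frames per second, nominal rather than fractional frame rates are used, and blanking and bit depth are ignored.

```python
def active_pixel_rate(width: int, height: int, frames_per_second: float) -> float:
    """Active pixels delivered per second for a given format."""
    return width * height * frames_per_second


# Format table: (width, height, full frames per second).
# Assumption: 60i is counted as 30 full frames (60 fields) per second.
FORMATS = {
    "1080/60i": (1920, 1080, 30.0),
    "UHD/60p": (3840, 2160, 60.0),
    "4K/60p": (4096, 2160, 60.0),
}

if __name__ == "__main__":
    base = active_pixel_rate(*FORMATS["1080/60i"])
    for name, fmt in FORMATS.items():
        rate = active_pixel_rate(*fmt)
        print(f"{name}: {rate / 1e6:.1f} Mpixel/s, {rate / base:.2f}x the 1080/60i rate")
```

Under these assumptions, UHD at 60p carries four times the pixels per frame and twice the full-frame rate of 1080/60i, so the board has to handle eight times as many pixels per second.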

1) Video Scaler

One or two scaler ICs are usually included in a monitor to handle video format conversions, including resolution, frame rate and color space. Scalers also perform functions such as color control, sharpness adjustment and de-interlacing. De-interlacing can be a major cause of internal processing delay.

For this reason, some of TVLogic's high-end monitors have a 'Fast Mode' that reduces the de-interlacing time at the cost of some de-interlacing quality. On some of our 4K monitors (LUM-310X-CI, 318G-CI, 313G-CI), input video formats of 4K (4096×2160) or UHD (3840×2160) are processed directly by the FPGA, so there is no delay due to resolution conversion (only some delay due to frame rate conversion). UHD monitors such as the LUM-242G and 242H also have no delay for UHD (3840×2160) resolution.
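To illustrate why de-interlacing can dominate the processing delay, consider a simplified model in which the de-interlacer must buffer a number of fields before it can output a frame. The model and the field counts below are hypothetical assumptions for illustration only; they do not describe how any TVLogic monitor or its Fast Mode is implemented.

```python
def field_period_ms(field_rate_hz: float) -> float:
    """Duration of one interlaced field in milliseconds."""
    if field_rate_hz <= 0:
        raise ValueError("field_rate_hz must be positive")
    return 1000.0 / field_rate_hz


def deinterlace_delay_ms(buffered_fields: int, field_rate_hz: float) -> float:
    """Delay added if the de-interlacer waits for `buffered_fields` fields."""
    return buffered_fields * field_period_ms(field_rate_hz)


if __name__ == "__main__":
    # Hypothetical comparison at 50 fields per second (50i).
    for fields in (1, 2, 3):
        delay = deinterlace_delay_ms(fields, 50.0)
        print(f"{fields} buffered field(s): {delay:.1f} ms")
```

In this model, every extra field the de-interlacer looks at improves the material it can work with but adds a full field period of delay, which is the kind of quality-versus-latency trade-off a faster de-interlacing mode makes.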

2) FPGA (Field Programmable Gate Array)

In broadcast monitors, FPGAs are widely used to process the input and output signals, to analyze and visualize the input video, to implement other professional features, and to perform the core processing for color control and calibration. Video processing time therefore increases as the video format gets heavier: higher resolution, higher frame rate and higher bit rate require more calculation and thus more processing time.

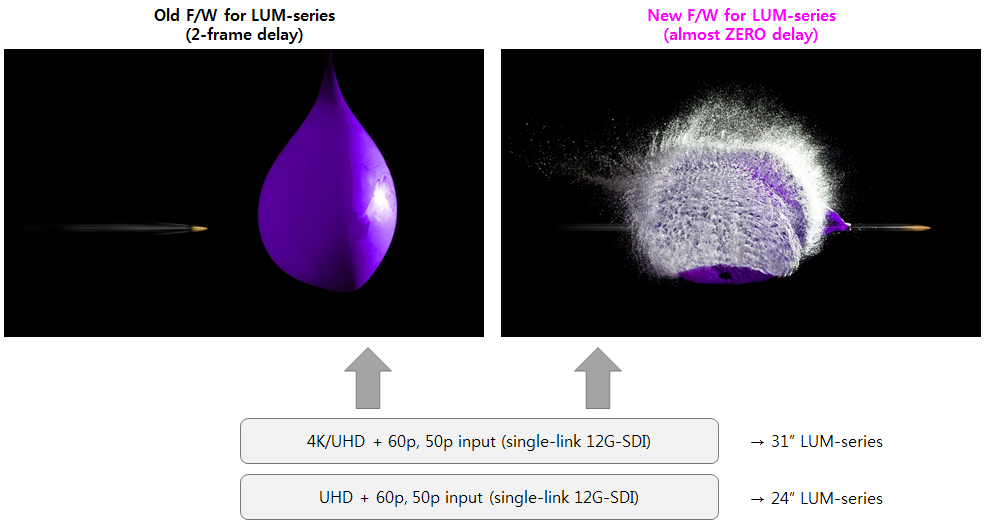

Recently, TVLogic introduced an innovative DRC (Dynamic RAM Control) technology for 4K monitors, starting with 3 upgraded models (LUM-310X-CI, 318G-CI and 313G-CI), in order to minimize the overall internal video processing delay. Video processing is now 2 to 4 times faster than before, and 200 times faster for 4K or UHD input signals at 50p and 60p frame rates. This means almost Zero Latency (Zero Delay) for 4K/50p and 4K/60p video input over a single 12G-SDI interface.